Featured Products

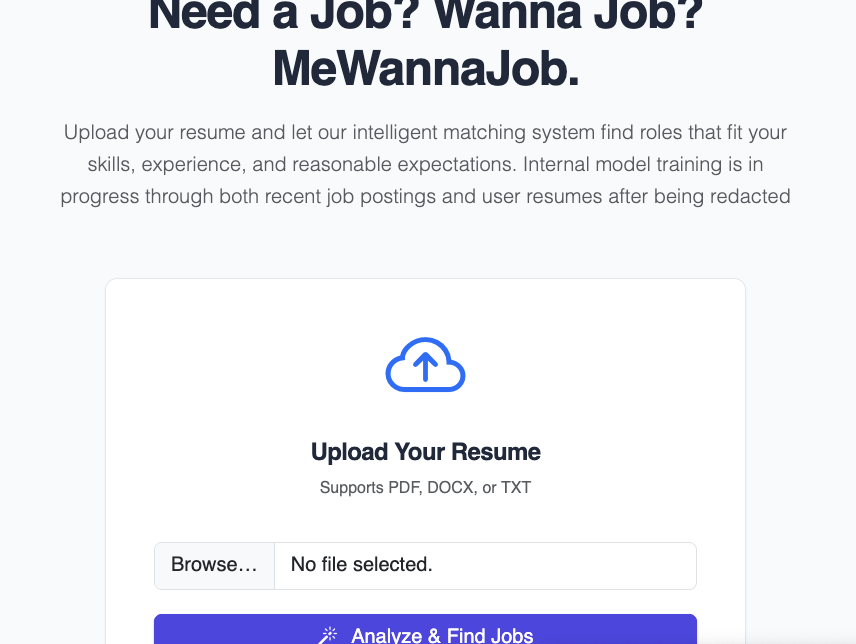

Me Wanna Job

Data-first job exploration platform built on the open jobpool.live dataset, with resume parsing, CSV Lab query workflows, dashboard views, and downloadable listing snapshots.

cider-cli

Python CLI for CIDR visualization and network exploration workflows, focused on quick analysis and readable output.